Projects


The projects here are all open-source software I have developed or led, ranging from production codes that run on the world’s largest GPU systems to personal Rust libraries built to scratch a specific itch. They are connected: the nonlinear solver library feeds the crystal mechanics library, which feeds the finite element code, and the Rust tools grew from the same workflow demands the C++ codes created. Each project page goes into the design decisions, the engineering tradeoffs, and the story of how the code got to where it is.

LLNL Open-Source Projects

These three C++ projects are developed at LLNL and form an interconnected stack. All are GPU-capable and have been deployed at full exascale as part of the DOE Exascale Computing Project.

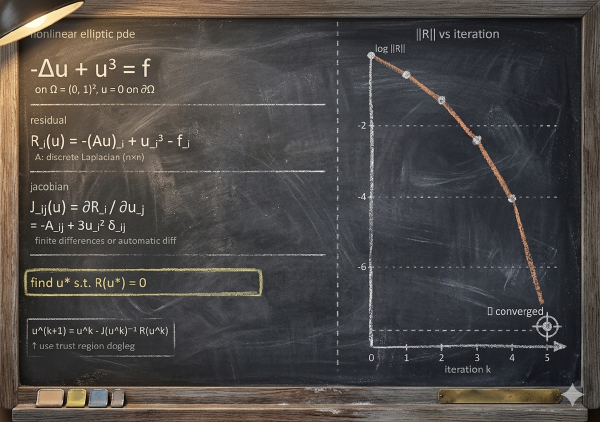

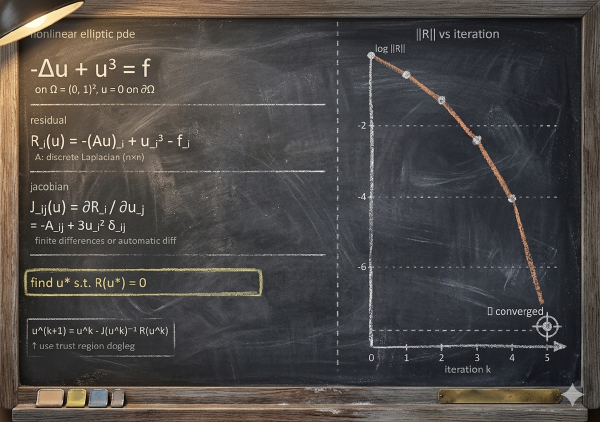

SNLS: A Small Nonlinear Solver Library: A compact library of robust nonlinear solvers and GPU-portable infrastructure built for the small dense systems that appear constantly in physics and material model constitutive updates. SNLS is the foundation on which everything else in the stack is built, and its design story is one of steady accumulation: batch solvers, a hybrid trust-region method, C++17 modernization, and portable memory management, each added in response to what the work actually demanded.

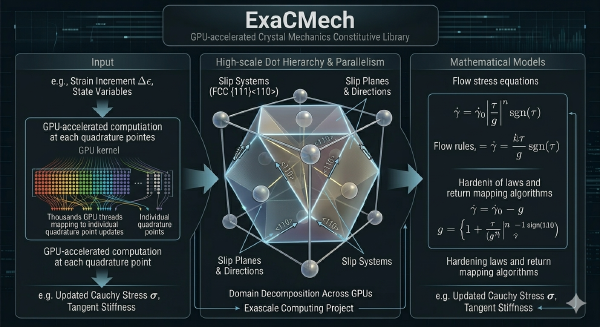

ExaCMech: GPU-Native Crystal Plasticity Constitutive Library: A library of crystal mechanics constitutive models for FCC, BCC, and HCP metals, designed from the start to run on GPUs without rewriting the physics for each hardware platform. The interesting engineering here is in the fused-kernel approach, the template-based model composition that keeps compile-time structure explicit, and the Python and JAX interfaces that let developers prototype new model forms without going through the full C++ implementation cycle.

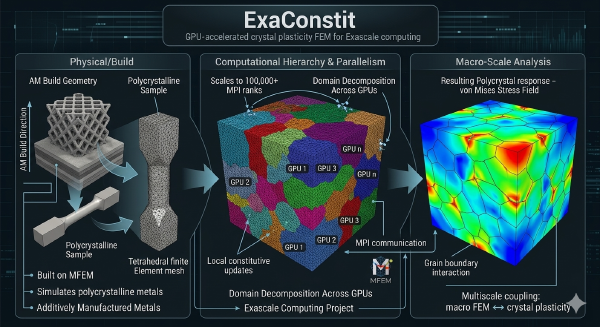

ExaConstit: High-Performance Micromechanics Finite Element Code: LLNL’s open-source crystal plasticity finite element code for simulating how polycrystalline metals deform at the grain scale. ExaConstit was built from scratch for GPU execution, scales to hundreds of thousands of MPI ranks, and has been the primary simulation engine for the ExaAM additive manufacturing pipeline. The project page covers the FEM framework, the multi-material architecture, the UMAT interface, lattice strain post-processing, and the open-source philosophy behind how the code is developed and shared.

Rust Scientific Computing

A collection of Rust libraries built up since 2018, each one addressing a specific gap encountered while doing the actual simulation work. They range from foundational data I/O to crystallographic orientation math to nonlinear solvers, and two of them live inside ExaConstit itself as workflow crates.

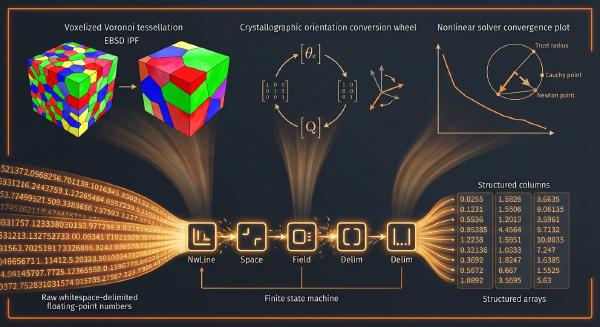

Rust Scientific Computing Stack: A single project page covering the full set of personal and ExaConstit-embedded Rust crates: mori for crystallographic orientation representations, rust_data_reader for fast whitespace-delimited scientific file parsing, voxel_coarsen for ExaCA microstructure preprocessing, xtal_light_up for diffraction-based post-processing, and HelixSnail for small nonlinear solvers. The page tells the story of how each one came to exist and what is technically interesting about how it works.