Where It Started#

SNLS predates both ExaCMech and ExaConstit. It was developed at LLNL specifically to solve the problem of running material model constitutive updates on the GPU, at a time when no existing nonlinear solver library was designed to do that. General-purpose solvers like MINPACK or PETSc are built for problems that live on the host. They carry significant overhead per call and are not structured for batched execution across millions of independent points simultaneously. In crystal plasticity specifically, every quadrature point in the mesh needs its own nonlinear solve to update stress and internal state at each time step, and those systems are dense, stiff, and need to run as fast as possible without any per-point failure being an option. That was the gap SNLS was built to fill.

The name is deliberately modest: Small NonLinear Solver. The emphasis on small is the whole point. For systems with five to eight unknowns, which is the practical sweet spot for most constitutive and physical models, direct factorization of the Jacobian is both correct and affordable. What is not affordable at these sizes is the overhead of calling into a general-purpose solver. SNLS is designed to live inside the device kernel, stack-allocate everything, and unroll completely at compile time. I came on as a developer some time after the library’s initial release, and from that point drove a series of redesigns that expanded it well beyond its original scope. The story of those redesigns is really the story of what turned out to be missing as SNLS was put to harder and harder use.

The Original Solvers#

When I came on, SNLS had two solvers at its core, and importantly both were designed to be GPU-callable from day one. You could drop either of them directly into a GPU kernel and they would work. The first, NewtonBB, is a 1D Newton solver with a bisection fallback. When the zero of a scalar function can be bounded, it converges even for functions that behave badly in the Newton sense, oscillating between bisection and Newton steps as needed to combine robustness with speed. In practice it handles scalar inverses that appear inside constitutive models, where analytical bounds are often available and Newton alone can oscillate.

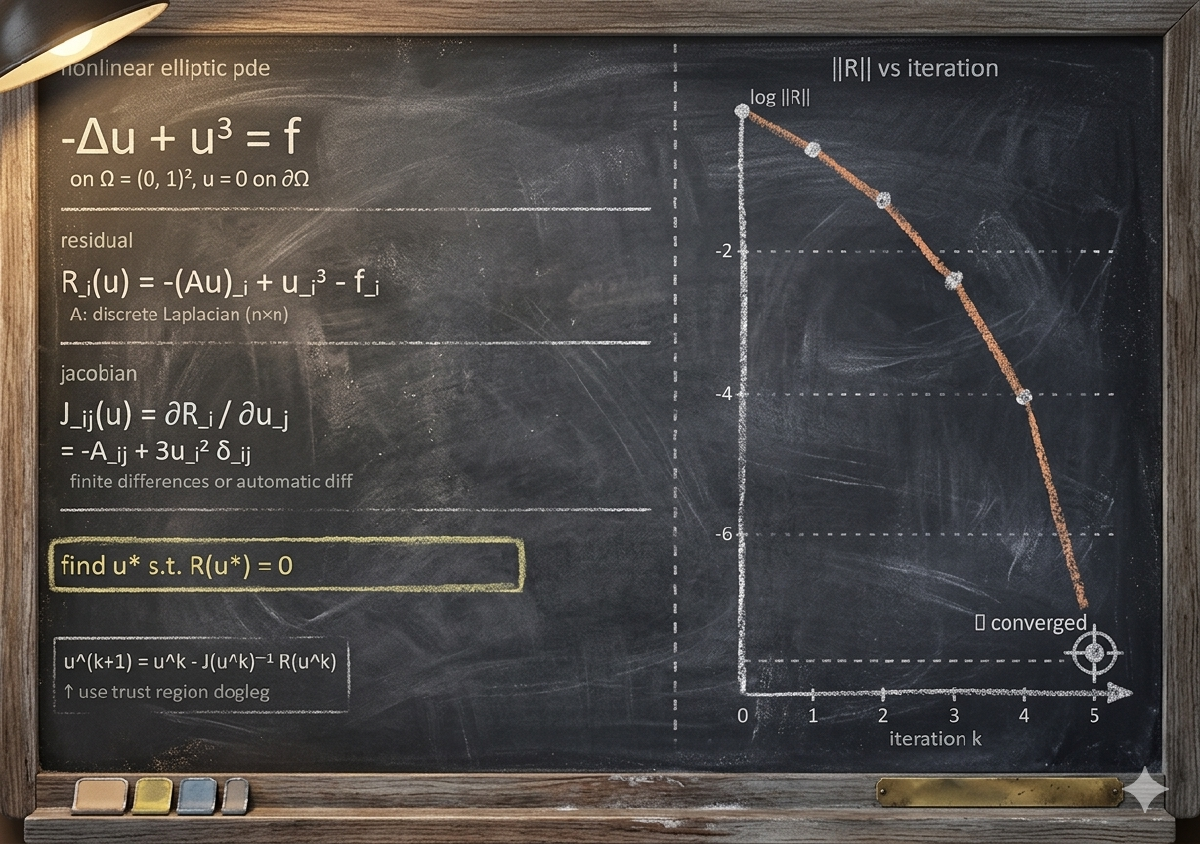

The second, SNLSTrDlDenseG, is the multidimensional workhorse: a trust-region dogleg solver for dense, general Jacobian systems. The dogleg method blends the steepest-descent Cauchy step with the Newton step based on whether the Newton step fits inside the current trust radius. This gives global convergence guarantees near a bad initial guess while recovering fast quadratic convergence near the solution, without paying the cost of a full trust-region subproblem solve at every iteration. It has proven to be the right choice for constitutive update problems because it handles the nonlinearity of the slip system equations robustly without the convergence fragility that pure Newton can show in difficult stress regimes. The solvers were solid, but the first thing I needed to build that didn’t yet exist was a way to run them over an entire batch of material points simultaneously on the GPU.

Building the Batch Solver and What It Taught Me#

A user came to me needing a batch version of the dogleg solver. Their use case required running the solve over a large collection of points at once, and the overhead of doing it point by point was too slow for their needs. I built SNLSTrDlDenseG_Batch to address that, and once it was working and delivering meaningful speedups for their problem, I also used it as a way to test something I had been skeptical about: the conventional GPU programming advice to decompose work into many small, focused kernels.

To test this properly, I built a version of ExaCMech that used the batch solver internally and broke the library’s normally fused per-point constitutive update into many separate kernel launches. Using the ExaCMech miniapp, I then systematically worked out which parts of the batch solver kernels needed to be fused together and what the best practices were for structuring problems for the batch approach. The answer was unambiguous: the fused approach for ExaCMech was still faster, by about 2.5x. The intermediate values generated during the iterative solve are local to one material point and never needed by any other. Writing those to global GPU memory between kernel launches and reading them back introduced bandwidth costs that no amount of kernel-level simplicity could offset. The batch work was not wasted, though. Building it gave me a much clearer picture of where the library’s infrastructure needed to grow.

As part of the batch solver development, I also created the initial versions of what would become the library’s memory management and portability abstractions. At that stage, the forall abstraction was simply a macro that let you specify how many GPU thread blocks to use per kernel call, modest compared to what it would eventually become, but a first step toward not hard-coding those decisions everywhere. With the batch work complete and the fused kernel approach validated, the next thing that needed attention was a problem that had been surfacing in ExaCMech work.

The Hybrid Solver#

The Orowan dislocation density model in ExaCMech, a more physically detailed hardening model that evolves dislocation densities on each slip system individually rather than tracking a single aggregate, was producing convergence failures in the standard dogleg solver under certain loading conditions. I suspected an alternative solver strategy might handle it better, which led me to develop SNLSHybrdTrDLDenseG, a hybrid trust-region solver inspired by MINPACK’s version of Powell’s hybrid algorithm. Powell’s method maintains a QR factorization of the Jacobian and applies rank-1 updates between full Jacobian evaluations, reusing curvature information to reduce expensive function evaluations. The SNLS hybrid blends this rank-1 update strategy into the dogleg trust-region framework.

As part of building the hybrid solver, I also created the library’s linear algebra abstractions, added the QR solver in SNLS_qr_solve.h (the LU solver had been around from the beginning), and simplified SNLSTrDlDenseG so that it and the hybrid solver could share common infrastructure rather than duplicating logic. Whether the hybrid actually performs better than the standard dogleg on any given problem is case-dependent. Sometimes it converges where the dogleg stalls; sometimes it is the one that fails. It is best understood as an alternative to try rather than an upgrade. When both solvers struggle, that is usually a signal to look elsewhere: simplifying the formulation, reformulating the problem, or sub-cycling are often more productive than tuning solver parameters. This period of work (batch solver, infrastructure foundations, hybrid solver, and linear algebra layer) was roughly when I was officially given the lead developer role. The next major push came later, when project needs created an opportunity to modernize the library more broadly.

C++17 Modernization and Infrastructure Expansion#

When new project needs required SNLS’s abstraction layers to grow significantly, I decided it was also the right time to modernize the library to take full advantage of C++17. Template argument deduction for class templates lets the compiler infer the CRJ type from the constructor argument, eliminating redundant template parameter lists that previously cluttered call sites. The test suite benefited particularly from structured bindings, if constexpr, and cleaner lambda capture semantics, which allowed many test cases to be rewritten in a shorter and clearer form.

One modernization that did not survive was auto return types on device-callable functions. The C++ standard permits them; NVCC does not. Clang-CUDA and AMD’s hipcc handle them without complaint, which creates an asymmetry worth knowing: if you are writing device-callable code and want full C++17 fidelity, your compiler choice matters as much as your language standard flag.

At the same time, the forall abstraction that started as a simple thread block macro grew into snls::forall(), a proper uniform API for launching parallel loops across CPU serial, OpenMP, and GPU backends. A loop body is written once as a lambda and the infrastructure routes it to the appropriate RAJA execution policy at runtime based on a backend selection stored in a Device singleton. Each call is now templated on the number of GPU thread blocks, can be specified as asynchronous (returning a RAJA::resources::Event that can be polled or waited on later), and can accept a CAMP resource to specify which GPU stream to use for concurrent kernel execution. The snls::forall_strat() variant lets the execution strategy be specified per-call rather than globally, which matters when most of a code runs on the GPU but a particular loop needs host access. This level of control makes it straightforward to pipeline work across streams without restructuring the surrounding code. Memory management grew alongside the forall abstraction.

snls::memoryManager sits on top of LLNL’s Umpire library. Umpire abstracts over the full memory hierarchy across hardware vendors, and every allocation carries metadata about which memory space produced it. Umpire’s QuickPool strategy pre-reserves a large block and sub-allocates from it, eliminating the per-call overhead that individual device allocations would otherwise incur. The coherence side is handled by LLNL’s CHAI library, which returns chai::ManagedArray<T> objects that track where data lives and automatically migrate it when accessed in a different execution context. When all three layers work together, the result is code that allocates, computes, and transfers data across the memory hierarchy without a single explicit copy operation in the application layer.

Making Batch Kernels Readable: SubView#

Also during this modernization work, I added the SubView class, which addresses an ergonomic problem that comes up constantly in batch kernel development. All your data is indexed globally across thousands of points, but your algorithm thinks locally within one point’s matrix or vector. Writing kernels that interleave those two indexing levels is error-prone, and because the errors are data-dependent, they often pass unit tests while corrupting results on production problem sizes.

SubView takes any RAJA View (or another SubView, since the class composes recursively) and a point index, and produces a view of one lower dimension that has already absorbed that index into its layout. A SubView of a 3D batch array [point, row, col] is a 2D view into one point’s matrix. A SubView of that 2D view is a 1D row. Once you have your SubView at the point level, the global index simply disappears from your code and you write the local algorithm against a local-looking array. The sliding window capability extends this for algorithms that need to march through a contiguous segment of local data in stages, updating the offset in place rather than maintaining an explicit counter. Together these features make batch kernel code substantially easier to read, verify, and hand off to someone who did not write it.

Where SNLS Fits in the Broader Ecosystem#

SNLS is a small library, and that smallness is genuinely one of its most useful properties. Because it is self-contained with minimal dependencies at its core, it compiles quickly, tests quickly, and can be evaluated on a new machine or compiler in an afternoon. That makes it an excellent first destination for infrastructure experiments: a new forall abstraction or memory manager pattern can be prototyped and validated in SNLS’s lightweight environment before a proposal to upstream the same pattern into RAJA or CHAI requires the full weight of those projects’ review and integration processes. In a few cases, patterns developed in SNLS have made their way into exactly those larger libraries, which is a kind of validation that is hard to manufacture deliberately.

At the same time, SNLS is embedded in production simulation codes where its solvers and infrastructure run at scale every day. That production exposure keeps the library honest: abstractions that work in a synthetic test but fail to perform or compose correctly under real workloads get found quickly, and fixes propagate to all downstream consumers simultaneously. The modernization work on SNLS also had a broader effect: the patterns and lessons from that effort opened the doors for ExaCMech’s refactoring, which in turn fed directly into ExaConstit’s major refactoring that followed. For a library measured in thousands of lines rather than millions, the reach it has had in both the infrastructure ideas it has seeded and the simulations it quietly powers has been larger than I would have expected when I first started working on it.